Build AI agents with confidence

The AI platform for enterprises. Lunary gives you the tools that top teams use to ship and scale AI with confidence.

Powering the world's best AI teams.

From next-gen startups to established enterprises.

Chatbot Analytics

Understand the gap between your chatbot and your users.

See how your LLM performs in real time and how users interact with it.

Deliver reliable AI experiences.

Built for every LLM use case, whether you're building internal tools or customer-facing applications.

Minutes to magic.

Self-host or go cloud and get started in minutes.

Own Your Data

Self Hostable

1-line Integration

Prompt Templates

Chat Replays

Analytics

Topic Classification

Agent Tracing

Custom Dashboards

Score LLM responses

PII Masking

Feedback Tracking

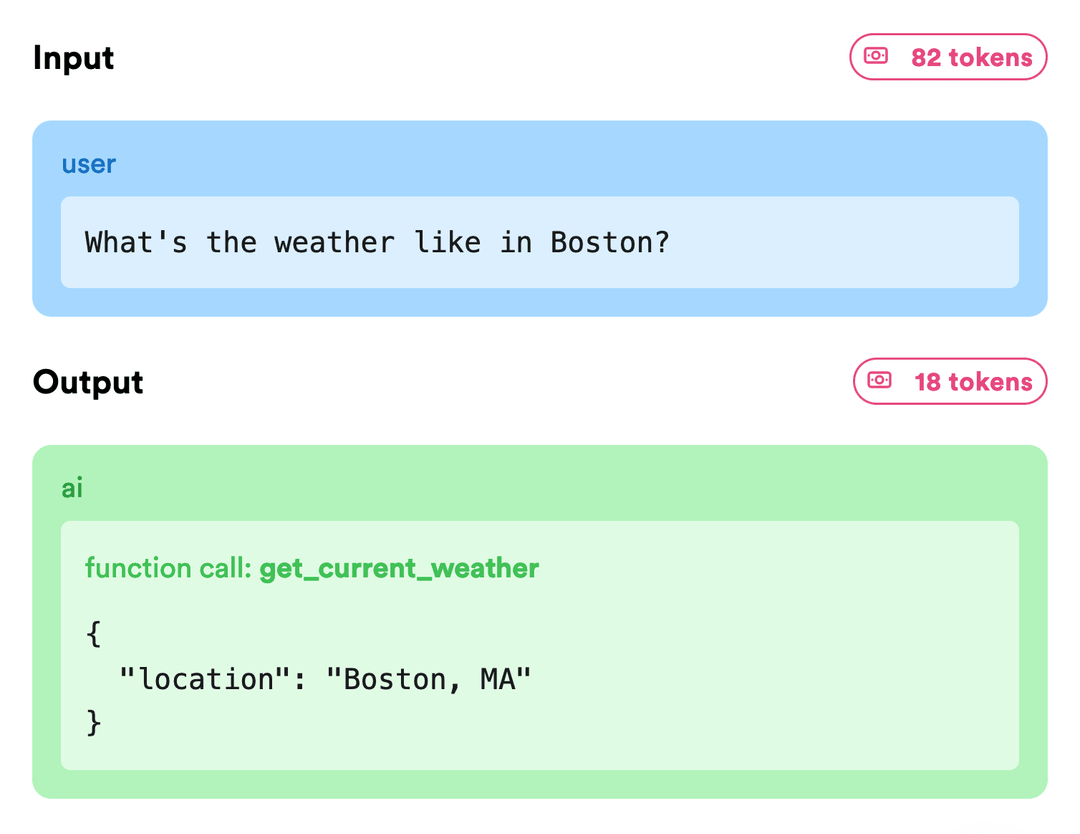

Debug LLM agents

Log all your prompts and results, and see how agents are performing in production.

- Traces & error stack traces

- Instant search & filters

- Label data for fine-tuning

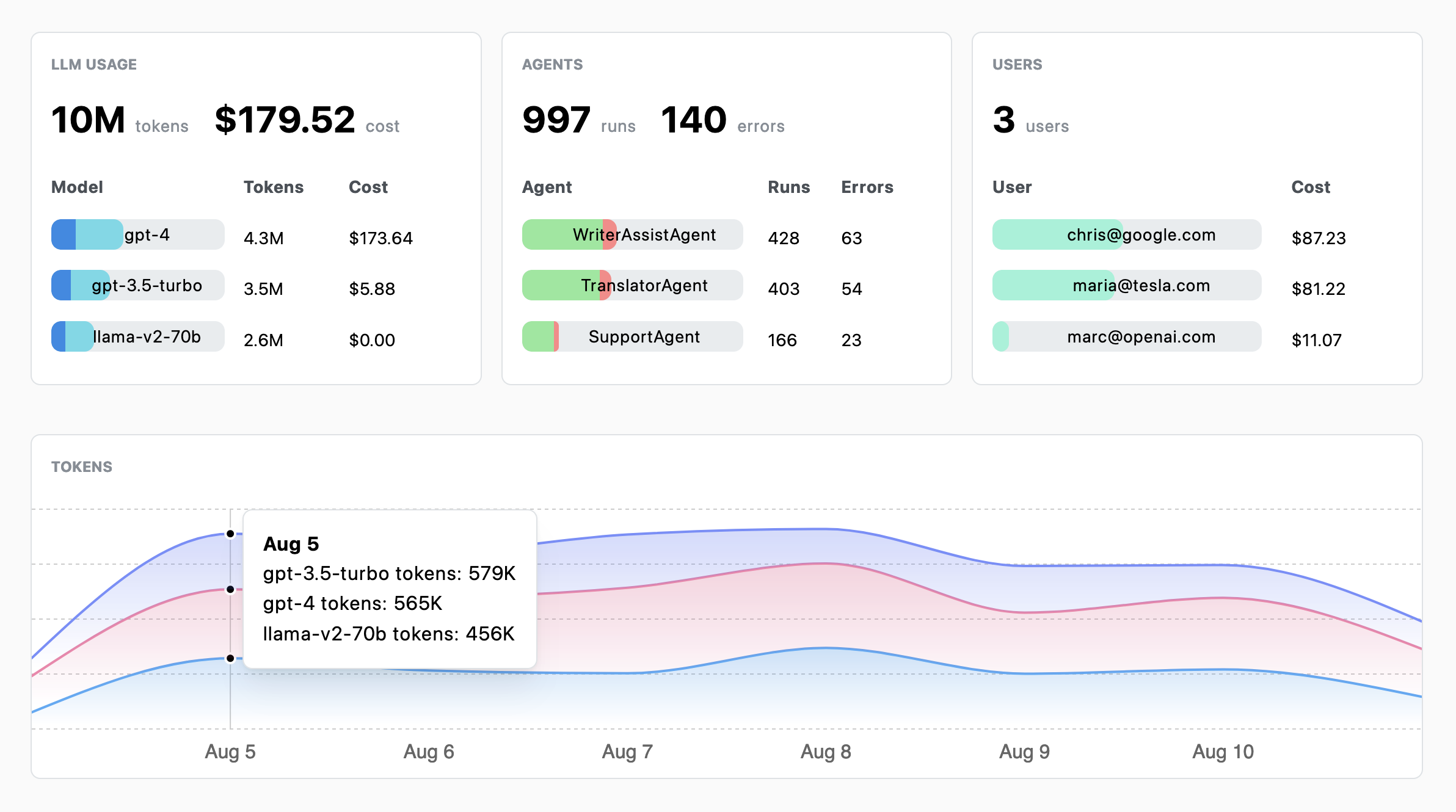

Analytics

Track and analyze the performance and costs of your GenAI projects, and how users interact with your app.

- Model usage & costs

- Analyze frequent topics

- User satisfaction

- Custom dashboards

Iterate on prompts

Create templates and collaborate on prompts with non-technical teammates.

- Clean up your source code

- Versioning

- A/B testing

Security

Enterprise ready

Lunary is SOC 2 Type II and ISO 27001 certified and designed to be self-hosted. Stay compliant and safeguard your sensitive data.

PII Masking

Safeguard user personal information. No more GDPR headaches.

Manage Access

RBAC and SSO. The right eyes on the right data, always.

Hosted in your Cloud

Deploy in your VPC with Kubernetes or Docker. Your infrastructure, your control.

SDKs

Any LLM. Any framework.

Seamless integration with zero friction. Our SDKs are designed to be lightweight and integrate naturally into your codebase.

Integrations

Capture LLM data across your entire stack.

Monitor and analyze AI interactions across your stack, with seamless integration to your preferred data destinations.

Tools

And much more.

Allow your team to judge responses from your LLMs.

Understand what languages your users are speaking.

Full support for text, audio and images.

Receive notifications when agents are not performing as expected.

Search and filter anything. In milliseconds.

Experiment with prompts and models. No coding required.

Group chats into topics. Uncover trends instantly.

Testimonials

Lunary is trusted by the best teams, from next-gen startups to established enterprises.
